Quantum Hadamard Edge Detection for Image Processing

Based on the paper “Edge Detection Quantumized: A Novel Quantum Algorithm for Image Processing” (https://arxiv.org/html/2404.06889v1).

This notebook demonstrates:

- QPIE (Quantum Probability Image Encoding)

- QHED (Quantum Hadamard Edge Detection) algorithm

QPIE Encoding Implementation

Convert an image into valid QPIE probability amplitudes. The image is converted to grayscale if needed, made non-negative, and L2-normalized so that the sum of squared values equals 1. The result is stored as an array, IMAGE_DATA.
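
The preprocessing described above can be sketched in plain NumPy. This is a minimal illustration, not the notebook's own code: the function name `qpie_amplitudes`, the channel-average grayscale conversion, and the use of `np.abs` to make values non-negative are all assumptions.

```python
import numpy as np


def qpie_amplitudes(image: np.ndarray) -> np.ndarray:
    """Return non-negative, L2-normalized amplitudes for a 2D or RGB image."""
    img = np.asarray(image, dtype=float)
    if img.ndim == 3:
        # Assumption: a plain channel average is an acceptable grayscale conversion.
        img = img[..., :3].mean(axis=-1)
    # Assumption: negative values are folded to positive with abs().
    img = np.abs(img)
    norm = np.linalg.norm(img)
    if norm == 0:
        raise ValueError("an all-zero image cannot be normalized")
    return img / norm


# Tiny 2x2 example: the L2 norm of [[0, 1], [2, 2]] is 3.
demo = np.array([[0, 1], [2, 2]])
amps = qpie_amplitudes(demo)
```

After normalization, the squared entries of `amps` sum to 1, so they can be read as the measurement probabilities of the corresponding pixel basis states.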

Modified QHED Algorithm

We define an ImagePixel QStruct with separate x and y registers and load the image via lookup_table: the amplitudes are computed classically from the pixel coordinates and loaded with prepare_amplitudes.

The QHED algorithm detects edges by:

- Adding auxiliary qubits in state $|+\rangle$

- Controlled shifts of $x$ (horizontal) and $y$ (vertical) by $1$

- Applying Hadamard to compute differences

- Measuring to get edge information
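
The effect of these steps can be checked classically on a small image. The sketch below assumes a single auxiliary qubit prepared in $|+\rangle$ and a cyclic shift of one pixel along one axis; the helper name `qhed_branches` is hypothetical, and the code simulates the amplitudes with NumPy rather than building a quantum circuit.

```python
import numpy as np


def qhed_branches(amps: np.ndarray, axis: int, shift: int = 1):
    """Simulate H, controlled cyclic shift, H on one auxiliary qubit.

    Returns the amplitude arrays attached to aux = 0 and aux = 1.
    """
    # With the aux qubit in |+>, both branches carry amps / sqrt(2).
    branch0 = amps / np.sqrt(2)
    branch1 = amps / np.sqrt(2)
    # Controlled shift: only the aux = 1 branch is shifted (cyclically).
    branch1 = np.roll(branch1, shift, axis=axis)
    # The final Hadamard mixes the branches into a sum and a difference.
    aux0 = (branch0 + branch1) / np.sqrt(2)
    aux1 = (branch0 - branch1) / np.sqrt(2)
    return aux0, aux1


# A 4x4 image with a vertical step edge between columns 1 and 2.
image = np.array([[0, 0, 1, 1]] * 4, dtype=float)
amps = image / np.linalg.norm(image)
aux0, aux1 = qhed_branches(amps, axis=1)
edge_prob = aux1 ** 2  # probability of measuring aux = 1 at each pixel
```

In this example `edge_prob` is nonzero in column 2, where the step edge sits, and also in column 0, because a cyclic shift wraps the last column around to the first. Flat regions cancel in the difference branch and get zero probability.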

Synthesize and Analyze the Quantum Circuit

The model is synthesized with a 20-qubit width limit and a long timeout, then exported as quantum_image_edge_detection with 15-digit numeric precision.

Output:

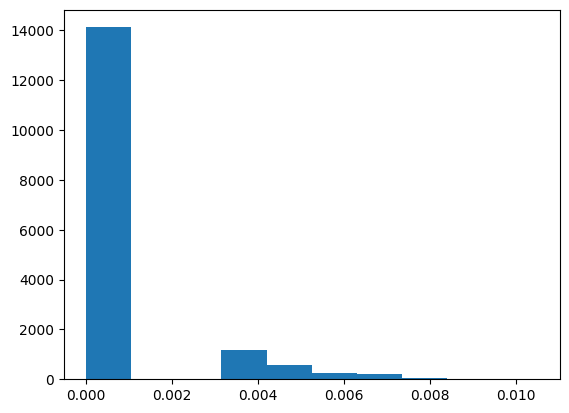

| | pixel.x | pixel.y | edge_aux | counts | probability | bitstring |
|---|---|---|---|---|---|---|
| 0 | 64 | 92 | 0 | 167 | 0.000835 | 010111001000000 |
| 1 | 37 | 89 | 0 | 165 | 0.000825 | 010110010100101 |
| 2 | 64 | 91 | 0 | 163 | 0.000815 | 010110111000000 |
| 3 | 62 | 91 | 0 | 161 | 0.000805 | 010110110111110 |
| 4 | 70 | 95 | 0 | 158 | 0.000790 | 010111111000110 |
| … | … | … | … | … | … | … |
| 17277 | 115 | 127 | 1 | 1 | 0.000005 | 111111111110011 |
| 17278 | 118 | 127 | 1 | 1 | 0.000005 | 111111111110110 |
| 17279 | 119 | 127 | 1 | 1 | 0.000005 | 111111111111000 |
| 17280 | 120 | 127 | 1 | 1 | 0.000005 | 111111111111000 |
| 17281 | 122 | 127 | 1 | 1 | 0.000005 | 111111111111010 |

17282 rows × 6 columns
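
The bitstring column can be related to the other columns with a short decoder. The layout used here is inferred from the rows shown above rather than taken from the notebook: the leftmost bit is edge_aux, the next 7 bits are pixel.y, and the last 7 bits are pixel.x. The function name `decode_bitstring` is hypothetical.

```python
def decode_bitstring(bits: str, coord_bits: int = 7):
    """Split a measured bitstring into (x, y, edge_aux).

    Layout inferred from the table: edge_aux is the leftmost bit,
    followed by pixel.y and then pixel.x, each `coord_bits` wide.
    """
    if len(bits) != 2 * coord_bits + 1:
        raise ValueError("unexpected bitstring length")
    edge_aux = int(bits[0], 2)
    y = int(bits[1 : 1 + coord_bits], 2)
    x = int(bits[1 + coord_bits :], 2)
    return x, y, edge_aux


first_row = decode_bitstring("010111001000000")  # row 0 of the table
last_row = decode_bitstring("111111111111010")   # row 17281 of the table

# The shot count implied by row 0: counts / probability.
shots = round(167 / 0.000835)
```

Under this layout the two 7-bit coordinate registers address a 128 × 128 pixel grid, and the counts and probability columns of the first row are consistent with 200,000 shots in total.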

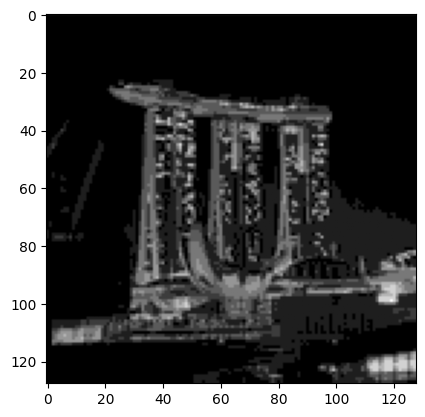

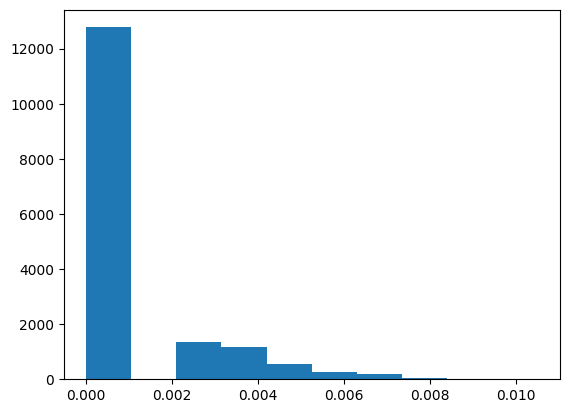

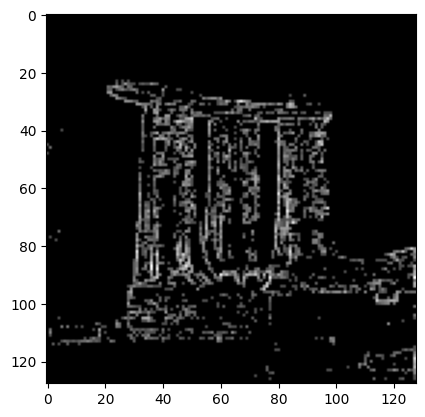

Create Edge Image From Measurement Results

If edge_aux == 1, the pixel is marked as an edge pixel.

The new amplitude of each edge pixel is calculated from the number of shots measured for that pixel, normalized by the total number of shots.
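
A minimal sketch of this reconstruction, assuming the measurement results are available as (x, y, edge_aux, counts) tuples. The function name `edge_image_from_counts`, the record format, and the row/column convention are assumptions; the 128 × 128 shape and the 200,000-shot total are inferred from the results table, and the sample records are three of its rows.

```python
import numpy as np


def edge_image_from_counts(records, shape, total_shots):
    """Build an edge image from (x, y, edge_aux, counts) records."""
    edges = np.zeros(shape, dtype=float)
    for x, y, edge_aux, counts in records:
        if edge_aux == 1:
            # Assumed convention: row index is y, column index is x.
            edges[y, x] += counts / total_shots
    return edges


# Three rows from the results table; only the aux = 1 rows contribute.
records = [(64, 92, 0, 167), (115, 127, 1, 1), (122, 127, 1, 1)]
edges = edge_image_from_counts(records, shape=(128, 128), total_shots=200_000)
```

Rows with edge_aux == 0 are ignored, so the resulting array is zero everywhere except at pixels that were measured at least once with the auxiliary qubit in state 1.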
